Ofsted: the problem of the ignorant inspector

My argument in this post is that Ofsted inspectors who are not specialists in a subject are poorly placed to make judgements about whether progress is being made by pupils in that subject.

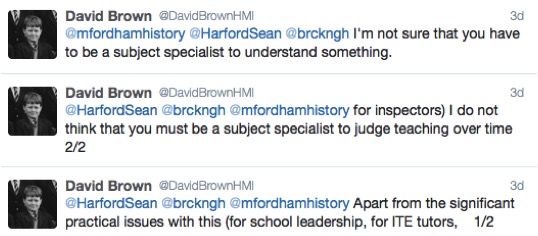

I am writing this in response to some comments made on Twitter by an HMI (HMIs being the senior inspectors employed directly by Ofsted).

The overall thrust of David’s argument here is that someone does not have to be a specialist in a subject in order to judge teaching over time. This is a very good example of something that I am increasingly calling ‘genericism’, the idea that ‘teaching is teaching’ and ‘learning is learning’ regardless of the subject being taught.

I do not know if David Brown HMI is giving the official line here, but the Ofsted Inspection Handbook implicitly concurs with his views by stating that a team of inspectors (all of whom would be non-specialists in most of what they inspect) has to do the following:

“Through lesson observations and subsequent discussions with senior staff and teachers, inspectors should ensure that they:

- judge the accuracy of teachers’ and leaders’ evaluation of the quality of teaching and learning

- gather evidence about how well individual pupils and particular groups of pupils are learning, gaining knowledge and understanding, and making progress, including those who have special educational needs, those who are disadvantaged and the most able

- collect sufficient evidence to support detailed and specific recommendations about any improvements needed to teaching and learning, behaviour and safety, and leadership and management.”

Although individual lessons are absolutely not to be graded and any judgement reached has to draw on evidence from a wide range of observations, the whole process depends on the assumption that non-specialist inspectors are able to make judgements about the quality of pupils’ learning.

I would argue that this is simply not possible.

In order to make a judgement about the quality of pupils’ learning and the extent to which ‘progress’ has been made, an inspector would need to know things like the following:

- What would good answers to the questions being asked look like?

- What would a poor answer or a misconception look like?

- What would something look like if it had been learned badly?

- What would a pupil need to know by the end of this lesson in order to make sense of the next lesson? Or the next term? Or year?

- What would be an appropriate level of simplification? – e.g. of ‘feudalism’ or ‘electron shell’

- What might a typical pupil of this age or prior attainment be expected to know about this subject?

These are all fairly obvious things: in order to know whether a pupil is making progress, I need to know what they are making progress towards. If I do not know what the ‘gold standard’ looks like in a subject, then I am poorly placed to judge whether any progress has been made.

It has, I think, long been recognised that this is a problem in subjects like languages or mathematics. If an inspector speaks no German, they can have absolutely no idea whether pupils are being taught well or badly because they cannot judge the quality of pupil work, whether through what pupils say in observations or through work scrutiny. Similarly, an inspector who does not know the difference between the sine rule and the cosine rule is poorly placed to judge whether pupils have mastered these ideas fluently.

The problem, however, lies not just with mathematics or languages: it is a problem across all subjects. An example here is illustrative. Let’s say that an inspector walks into a history lesson and sees the question on the board ‘Why did the English Civil War begin in 1642?’ The inspector spends half an hour watching the lesson, in which pupils do some reading, have a paired discussion and then write a short paragraph in their books, before some whole-class questioning. During the questioning, a pupil (identified on the lesson plan as average ability) states something along the lines of:

Religion clearly played a role in the causes of the English Civil War. Had it not been for the religious factors, Charles’ disagreements with Parliament would not have reached a point where war broke out.

If you are reading this as a history teacher, you are probably thinking something along the lines of ‘well, that answer makes no reference to Laud’s reforms and the introduction of the Prayer Book in England and in Scotland, nor does it refer to how Charles’ religious reforms helped spark the Bishops’ Wars which resulted in Charles recalling Parliament and beginning the set of events that led to the outbreak of the conflict.’ Perhaps you are looking at that response and thinking ‘that’s not a particularly good counterfactual explanation’. An inspector who does not know anything about Laud’s reforms or counterfactual explanations is not in a position to judge whether or not this pupil has learnt anything: as an inspector does not know what he does not know, he also cannot know what a pupil does not know.

The problem here rests not just with observations, but with all aspects of an inspection that are designed to reach judgements about the quality of pupil learning and the extent to which ‘progress’ has been made. A non-specialist inspector cannot know whether a pupil’s work in their book is good, nor whether a teacher’s feedback is appropriate for a pupil at that particular stage. A judgement about the validity of progress data is meaningful only if an inspector has confidence in the assessments being used to measure pupil learning, and a non-specialist cannot know whether the test and mark-scheme created by, say, a geography department is any good unless they know (a) the geography being tested and (b) how examinations in geography are constructed.

Without specialist knowledge on which to base judgements about the quality of learning in a subject, non-specialist inspectors have to fall back on proxies such as ‘are pupils engaged’, ‘do pupils think they are learning’ and ‘is feedback being given’, all of which are poor measures of whether or not pupils have actually learnt anything about a subject.

I have no issue at all with non-specialist inspectors judging generic things like behaviour. It is clear to me, however, that non-specialist inspectors have very little to go on when judging the quality of learning in any particular subject as they (1) do not know the subject themselves, (2) do not know what ‘good’ and ‘bad’ learning looks like in that subject and (3) have no sense of what would be an appropriate level of knowledge to expect of pupils of certain ages or prior levels of attainment.

Ignorance is not something of which someone ought to be ashamed: I am ignorant of a wide range of subjects (I got picked up by a science-teacher friend last week for saying that stars were ‘burning’). We ought, however, to be aware of our own ignorance and areas of expertise, and not try to be something we are not. A non-specialist is poorly placed to make judgements about whether or not pupils are getting better at a subject, and this elephant in the room has not received as much attention as it needs in recent criticisms of our current inspection regime.

The issue of judging quality of teaching is so much wider, I fear. The questions about an observed lesson, offered above, all require a certain subjective response. This is the problem with judging teachers and teaching or learning. Specialism enables you to see more deeply as an observer, to recognise inaccuracies of delivery and missed opportunities, and to understand fully what you see. Non-specialists bring a useful element of objectivity – sometimes an affiliation with the recipient learners. I find it useful to be a specialist in some situations, but in others I find it clouds my view, making me a little obsessive. Sometimes, in paired observation, I defer to another in pedagogy of a certain subject, and if alone I will factor that into my observation. It is still possible to draw conclusions about learning and teaching if one has adequate experience. With or without specialism, a measured approach is vital, with no assumptions. The same arguments can be had about specialist/non-specialist teachers. Being a subject specialist does not always make you the best teacher, in my view.

I agree with your first few points about judging teaching always being a subjective process – this is why single-lesson observations (for example) are a poor way of managing teacher performance.

I disagree that non-specialists bring something additional to the mix – the difference (by definition) between a non-specialist and a specialist is that the former lacks knowledge that the latter has – it is hard to see how objectivity of judgement of learning comes from ignorance of the thing being learned!

I also disagree on the last point – it is hard to see how knowing less about the thing being taught can make you a better teacher of the thing being taught. This doesn’t imply that good knowledge of the thing being taught alone makes you a good teacher, but it is a necessary condition.

This appears to be a no-brainer… yet I do not know what an inspection is trying to achieve or how it would be best to go about it, as I’m not an expert in inspections. Who is? (I get the impression that the high expectations that are becoming evident in the teaching realm are starting to appear with respect to inspections and accountability?)

By now, we should all be aware that subject knowledge is so low on inspectors’ priorities that the average HMI would probably be mystified by these comments. At the moment I am researching personalised learning, and all the sources are overwhelmingly concerned with the process of learning and apparently completely indifferent to what children actually learn – so long as they ‘own’ their learning. It is curious that Michael Gove endorsed personalised learning, apparently oblivious to its implications.