Where have progression models in history gone wrong?

The dominant approach at present to defining progression in learning history is to make use of ‘disciplinary’ or ‘second-order’ concepts such as ‘causation’, ‘change’ and ‘evidence’. Sometimes this gets construed as a form of thinking (e.g. ‘causal reasoning’ or ‘evidential thinking’), and at other times as a set of skills to be practised. Now I do not want for one moment to suggest that these concepts are not important: on the contrary, getting better at history means learning a great deal about different forms of causal argument, about ways in which change and continuity can be held in tension in narrative or how different types of source might be interrogated to provide evidence that can be deployed in argument. What I do want to suggest, however, is that the process by which these disciplinary concepts have been elicited from the discipline and converted into progression models relies on a flawed logic which has delivered no end of headaches to the field.

I am going to be making use of an analogy in this post, and I want to put it on the table now for you to ponder a little as you read the rest of it. Imagine for a moment getting together all of the ingredients for baking a cake: flour, eggs, sugar, spices, colouring, and so on. Now imagine baking your cake and, after admiring it, cutting it into slices of different shapes and sizes. Finally, imagine taking photos of those slices and showing them to students in cookery classes and telling them that each slice is an ingredient of the cake. Take a pause to get that little story clear in your mind, for we shall be returning to it shortly.

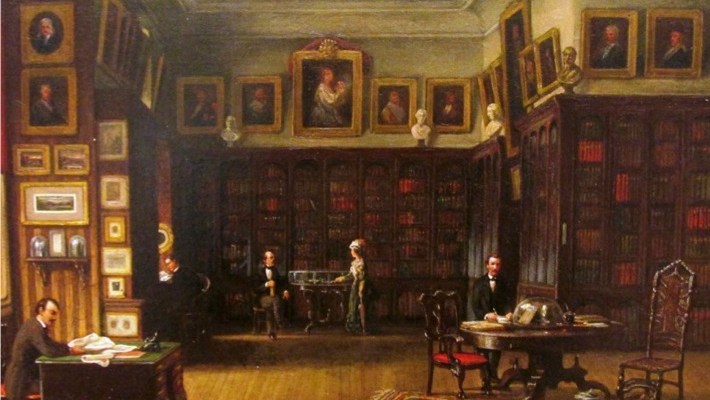

Back to the history. Let’s take a crucial component of the discipline: the way a historian uses his or her sources. This process is not always made particularly visible, but you certainly get a keen sense of what a historian is doing by listening to a research seminar or reading a paper in a scholarly publication such as the English Historical Review or Past & Present. I must have read and heard hundreds of these over the last fifteen years, and the following list gives a flavour of some of the things that historians have known about their sources.

- forms of address used in letters in the fifteenth century

- different theories concerning how one discerns authorial intention

- common errors made in producing copies of manuscripts

- archiving conventions at the National Archives in Kew

- how census data were collected in 1801

- common abbreviations used in baptism records

- referencing conventions for citing a collection of unpublished letters

- the shapes of letters in Carolingian Minuscule

- Anglo-Saxon law codes produced at the time a source was written

- the way the meaning of the word ‘liberty’ has changed over time

- appropriate vocabulary used by historians to convey uncertainty

- the intended audience of Papal Bulls

- the political affiliation of an eighteenth-century newspaper

- Archbishop Parker’s motivation for collecting manuscripts in the sixteenth century

If all of this sounds remarkably specific, then I think this tells us something about the practice of the discipline. When one reads research papers in a particular historical field, one finds them littered with these kinds of details. Historians know many such details about their sources, and knit this knowledge together in all sorts of interesting, complex and sometimes novel ways. The finished product is of course a work of historical scholarship.

Now herein lies the problem. Because so many of these features are quite specific to what is being researched, it becomes difficult to produce general statements about the nature of historical practice. This frustrates two groups of people who have a particular desire to produce such generalisations: philosophers of history and curriculum theorists. Philosophers of history want to find generalisable statements about historical practice because they want to identify what is essential to the discipline – i.e. are there some things that all or most historians have in common? Curriculum theorists want generalisable statements about the discipline because they want to establish a clear set of curricular aims for students. Such philosophers and theorists are thus incentivised to find a generic language that can be used to describe historical practice in a range of contexts.

This language will be familiar to anyone who has read works in philosophy of history (e.g. Carr’s What is history? or Megill’s Historical Knowledge, Historical Error) or history curriculum theory (e.g. Seixas’ The Big Six). To stick with the use of sources for a moment, we use terms to describe what historians do such as “assessing the credibility of an author” or “establishing the typicality of a belief”. We might reasonably state that historians establish the ‘reliability’ of their sources or ‘make inferences’. All of these descriptions are true. They are generic statements about historical practice, and are thus suitable for making generic descriptions about the discipline.

The problem comes when we, as curriculum theorists, then take the next step, and convert these generic descriptions of historical practice into a progression model. Having established that historians “assess the credibility of an author”, we then try to break this down into smaller components that can be learnt by a novice. Perhaps under pressure from accountability frameworks, we might try to turn these components into a hierarchy, and thus show how some aspects of “assessing credibility” are easier or harder. Some theorists have put a great deal of work into producing these models, finding more and more precise ways to define the components of things such as ‘evidential thinking’ or ‘causal reasoning’. The UK 1991 National Curriculum is an excellent case in point, though there are plenty of current examples available.

Hopefully you have not forgotten the cake, and perhaps you have now seen where I am going with the analogy. Historians rely on a myriad of (often period-specific) items of knowledge – and I take this to incorporate know-that and know-how – in order to construct their works of scholarship, in the same way as a chef combines ingredients to bake a cake. What philosophers of history and curriculum theorists do is construct a language to describe the cake in its finished form, and which allows them to compare one cake with another. This is fine when left at the generic level: I have no quarrel here with the philosophy of history. The problem arises when these generic descriptions of disciplinary practice get broken down into components in order to create a progression model for learning the subject. The cake is taken and cut up using the generic tools, and then the slices get treated as the ingredients to be learnt.

The problem, of course, is that mastering the slices is not what allowed the historian to create the scholarship in the first place. Generic language for describing disciplinary practice stops being meaningful when used to describe the specific things that a historian had to learn to produce a work of history. In order for a novice to learn to produce history, he or she must master a number of things similar in nature to those in my list above. Whilst it is not incorrect to use generic language to define the emergent properties of disciplinary products (i.e. works of scholarship), that language has little use in defining the curriculum that a novice needs to study.

This is one area in which progression models in history education have taken us in the wrong direction, but so well-established is this approach that it is difficult to think about progression without falling into the same trap. The escape, I think, comes not in rejecting the generic language of practice completely, for these descriptions are meaningful at the general level. Instead, we need to break from the constraints of the generic and develop approaches to curriculum design that are cognisant of what it means to get better at the discipline. I intend to return to this matter in a future post.

How times have changed. When I was an undergraduate History student at UEA between 1989 and 1993, we read Carr and Elton in our first term, and they were never referred to later. We seldom saw original sources (other than quotes in secondary works or heavily annotated tracts) except in our special subject; even then, the few we read were written by central actors of the time, so we already had a pretty good context.

Asking school students to read original documents is, I believe, ludicrous. This is really the realm of postgraduate studies, where one knows enough about a narrow subject to appreciate the significance of the kind of questions enumerated above. But it’s even more difficult than that: as a mature student, I had a huge advantage over those coming straight from 6th form in terms of what I already knew, yet I still found myself handicapped when reading original sources because I lacked even the rudiments of a classical education, which were an essential element of the education of 18th-century gentlemen. Needless to say, today’s schoolchildren lack even the smattering that I picked up along the way.

Of course, all subjects have suffered from educators’ delusion that we can just forget the basics and go straight on to advanced behaviours. This was the delusion behind the ‘real books’ craze of the 1970s and 1980s, which left tens of millions of people all but illiterate before psychologists proved that everything taught by Goodman and Smith was just plain wrong.

The lecturer for my special subject recounted the tedium of studying for his PhD on Robert Harley. If memory serves, Harley’s papers were at the British Library, and most of them had never been catalogued. He said that nothing was more demoralising than to find that the box he was presented with in the morning contained nothing more interesting than Harley’s laundry lists.

These for me are simply issues of epistemology.

I would simply take photographs of the ingredients and also of the cake slices. I would explain that when combined, the ingredients produce the cake. There is no need to tell anyone that the slices are the ingredients.

Much of your discussion is simply about novices and experts. Willingham, for instance, talks about not teaching students of science to be science experts, or scientists. If I remember correctly, he may also have talked about historians.

You seem to conflate the whole epistemology thing with the novice / expert thing and suggest that the result is not a good basis for planning progression.

I believe that in most if not all subjects, learners tend to develop (construct) a working knowledge that allows them to solve real-world problems. Specialists (e.g. historians, scientists, linguists) tend to develop a deeper understanding within a narrower range of concepts and become experts. The issue of whether the novice/expert thing is a continuum or a binary process is for me an interesting one and, I think, relevant here.

It seems to me that you describe creating experts who are at one and the same time generalists and therefore your dilemma of progression appears.

If one looks at the thing from the perspective of the learner and the concept then I believe the thing becomes quite straightforward. You are, for me, trying to be all things to all men and then you are surprised when the model doesn’t work elegantly.

This is a fascinating post which I will reread along with Tom’s comment and any others that are forthcoming.
