Are the Teachers’ Standards fit for purpose?

I must begin on a positive. The whole profession has been crying out for years that the training period teachers have is not sufficient – indeed, one of my very first blog posts, back in 2013, was on this very issue. The good news is that the government has just opened a consultation on creating a more structured training programme for new teachers that goes beyond a single year. The details on this will of course be what matters, particularly if we are to avoid this just becoming a rebranding of NQT induction, but the overall direction is nevertheless a good one.

In this post, however, I want to use this development to raise a concern. The concern I have is with the Teachers’ Standards, the set of criteria that we use to judge whether someone is eligible for Qualified Teacher Status. At present we just have one set of standards, but the new model under consultation suggests having two sets: one that has to be met by the end of the first training year (QTS(P)), and another ‘more robust’ set that has to be met by the end of the second training year (QTS). This carries the clear implication of ‘progression’ from one to the other: the second set of standards will have to assess a higher level of proficiency than the first set.

Before going any further, I am assuming that readers of this post are already familiar with the criticisms that exist of using descriptive criteria to measure ‘progress’ (i.e. getting better at something). If you are not, then a very quick introduction is this blog post by Daisy Christodoulou, and you can read a greater elaboration on the ideas there in her book Making Good Progress.

In a nutshell, there are two intractable problems with descriptive criteria. The first is that they are subjective and require interpretation to be used in practice: given that criteria are supposed to help provide a common shared meaning beyond specific contexts, this is a problem, although one that can be mitigated to some extent by moderation and sharing examples. The second is that they rely heavily on verbs that then have to be modified in order to show progression towards and beyond the criterion. For example, one bullet point in Teachers’ Standard 2 states:

- be aware of pupils’ capabilities and their prior knowledge…

What does it look like when someone gets better at this thing? Should it be the case that, at the end of their training year, we want trainees to ‘be aware of pupils’ capabilities’, and that at the end of their NQT year they are ‘more aware of pupils’ capabilities’? How can you distinguish between ‘aware’ and ‘more aware’? Or do we say that, in the first year, teachers are “starting to be aware of pupils’ capabilities” and that this ‘starting’ has come to an end by their second year?

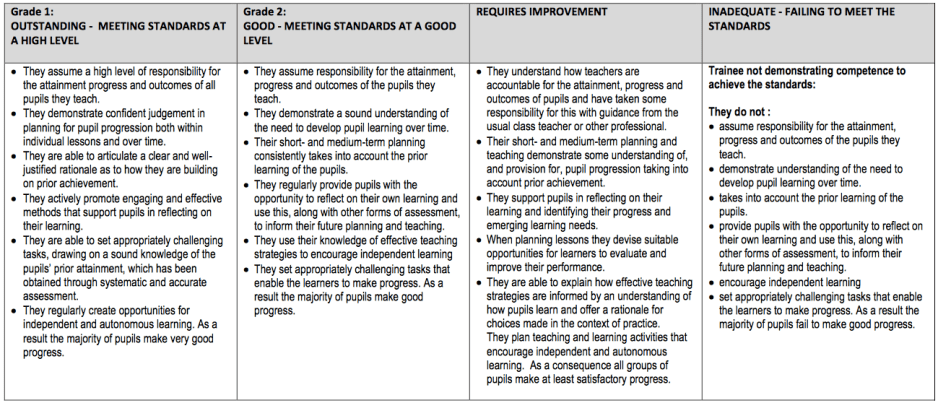

If this sounds comic, then that’s because it is, although tragically so in that this is something that ITE providers have had to do for years. Ofsted requires that ITE providers demonstrate that the majority of trainees exceed the Teachers’ Standards. This means that providers have been forced to produce progression models that take the Standards and imagine what a harder version of each standard looks like. Here is a very typical example – I am not mentioning which provider it is, as this is not intended to be a criticism of providers, but rather the system under which they operate.

As you read across from right to left, you can see the attempts to define ‘progress’ (i.e. ‘getting better at teaching’). As you read through, keep in mind that someone has to use these criteria to judge a trainee, someone has to moderate that judgement, and a trainee will get a number telling him or her how good they are at teaching. So what does this ‘getting better’ look like? Let’s take a look at the first bullet point, which moves from:

- take some responsibility

- assume responsibility

- assume a high-level of responsibility

What it means to ‘take responsibility’ is already a fairly vague notion, but this is exacerbated by then requiring assessors to distinguish between ‘responsibility’ and ‘high-level of responsibility’. Let’s take another example. Trainees are supposed to go from:

- They support pupils in reflecting on their learning and identifying their progress and emerging learning needs.

- They regularly provide pupils with the opportunity to reflect on their own learning and use this, along with other forms of assessment, to inform their future planning and teaching.

- They actively promote engaging and effective methods that support pupils in reflecting on their learning.

Keep in mind that the difference between the first bullet point and the third here is, supposedly, the difference between an ‘Outstanding’ trainee and one that ‘Requires Improvement’. I do not want to labour the point, but take a look at this example from Teachers’ Standard 2:

- They are astutely aware of their own development needs in relation to extending and updating their subject, curriculum and pedagogical knowledge in their early career and have been proactive in developing these effectively during their training.

- They are critically aware of the need to extend and update their subject, curriculum and pedagogical knowledge and know how to employ appropriate professional development strategies to further develop these in their early career.

Is someone who is ‘astutely aware’ doing better or worse than someone who is ‘critically aware’? How do we decide that someone has been sufficiently ‘proactive’?

These issues are now well-known in assessing children in different subjects in school, although the system as a whole is really only beginning to develop ways to move on from the limitations of descriptive criteria. It would, however, be a great irony if we adopted a system of assessing teachers that is based on a flawed assessment model that those very same teachers are moving away from when teaching children.

So here is the problem. We are about to move to a situation where we have two sets of Teachers’ Standards: one for the end of the first training year and one for the end of the second training year. The latter will need to demonstrate ‘progress’ from the former. A group of intelligent and experienced people will be sitting down in a meeting room in the DfE to write them. And then these will be launched for providers and schools across the land to use.

My question is: how can this group avoid falling foul of the inherent problems associated with using descriptive criteria? I have seen group after group of intelligent, knowledgeable and experienced history teachers hit a brick wall in this process. A brief search for ITE grading criteria will show that groups of intelligent, knowledgeable and experienced teacher educators have also hit brick walls, of which the example in this blog post is typical. This all suggests to me that our problems here do not stem from a lack of intelligence, knowledge or experience. If it were possible to use generic descriptive criteria to model progress, then I think we surely would by now have worked out how to do it.

I think, rather, that the problem here is that the very model itself does not work.

Let’s just take your first example from the Teachers’ Standards: a teacher should “be aware of pupils’ capabilities and their prior knowledge”. When I was first engaged as an unqualified teacher of literacy skills by a Norwich comp, the first thing I did was administer standardised tests of reading and spelling to all SEN pupils. On top of that, I convinced the Head of Geography (who was responsible for my being engaged) to administer the non-verbal reasoning test from the Children’s Ability Scales to our intake.

All this was quick and painless, and it enabled us to group pupils for instruction very efficiently and accurately. However, I’m sure this isn’t what the authors of the Teachers’ Standard had in mind; I’ll wager they were thinking of journeys to the top of Bloom’s pyramid. Never mind that AfL has been with us for over 15 years, and as Prof Robert Coe has lamented, there is no evidence whatever that this has improved our pupils’ academic achievement. Yet what I did also enabled me to demonstrate that nearly all of our pupils had indeed made very good progress in learning to decode and spell. And on top of this, the pupil with the highest score (out of 140 pupils) on the non-verbal reasoning test was around the second percentile in reading and spelling, and unsurprisingly was also on the SEN register for behaviour. He lived in social housing, and any number of teachers and SEN advisers in primary school had merely written him off. I’m pleased to say that with a lot of help from his mother, he caught up by the time he reached KS4. Of course, by this time he’d missed out on most of his schooling.

I’ll be the first to admit that it takes a lot of training to survive in the bizarre system created by our betters. However, my only prior training was a weekend instructors’ course in the TA. Admittedly, I was later sent on a two-week Signals Instructors’ course, but that was to learn about signals, not the Army’s Methods of Instruction. The time I spent in the education stacks in the UEA Library whilst studying English History no doubt helped me understand that most educators sneer at the idea that schools should be transmitting knowledge and useful skills (like spelling), but it did nothing to prepare me for the vast amounts of meaningless data that teachers are expected to produce. Fortunately, my Senco wrote all the statement reviews and IEPs (as they were then called), so all I had to do was teach. Hence, I went home at 3:15 along with the pupils, and I really enjoyed teaching.

Sadly, it has got a lot worse since then. And we now have a workforce that is so inured to the kind of nonsense contained in the Teachers’ Standards that they have no concept of what it’s like when you only need to know your subject and use a bit of common sense. Teaching the young is one of the most basic human instincts, and it’s remarkable how professional educators have managed to pervert this.

Thought-provoking as always.

Perhaps a useful thought experiment here is to ask the reverse question. What would ITE look like with no prose descriptors? Daisy argues that it is important we have a progression model. What is the alternative model?

Working as a teacher trainer we talk regularly with our trainees about how to improve – Daisy’s shared meaning. Absent any descriptors, we could use comparative judgement (expert other teachers?). But this does not provide a map of how to get there. And one could also argue there is more than one picture of what good looks like.

So surely it is a combination of descriptors and CJ that provides a way forward here. If I add value to my trainees it is perhaps largely because I sit in 20 lessons in 7 schools and a variety of subjects. Adding this to the rubric provides a stronger basis for conversations about what good looks like.

But I’d love to hear your thoughts on progression models (I read your TF talk with interest) and teacher assessment (not least as I am about to write on this issue).

Agree with these criticisms. The major issue with the Standards is that they have all the issues of levels when turned into a progression model like this. In actual fact, the scope under each standard is supposed to represent the kinds of things that trainees might do to demonstrate meeting AND exceeding the standards, yet nobody seems to have grasped this. Equally there is a real problem of the weighting of the scope within a standard and to what extent different aspects of a standard contribute to a judgement. Ultimately it is all a bit of a mess, even if I think the general statements themselves are not overly problematic.

I mark UG BSc assignments using a rubric that has 5 rows. Across each row are statements that vary only by the adjective used (exceptional/comprehensive/good/competent/acceptable/some/very little…knowledge of… principles/concepts/methods of enquiry). These adjectival descriptors might as well be replaced with a 1-7 Likert scale. However, the rows are useful because they draw my attention to the strands that, as an academic team, we consider to be important. For example, the first row is ‘knowledge of principles/concepts/methods of enquiry’ whereas the second row is ‘ability to apply principles/concepts/methods of enquiry outside the area in which they were studied’. When I’m marking I use a mental model based on all the other assignments I’ve marked and an idea about the expected spread of grades, to determine the 1-7 standard, but I use the rubric to help ensure I take account of all 5 strands. The rubric I have to use for marking PGCE assignments at M-Level also has rows that correspond to strands that we consider important, but this time the statements across each row are sometimes more helpful in determining the mark. For example, there is a fairly clear difference between “You have consulted a range of source material” and “You have summarised and used major relevant sources in a relevant manner.” There is still an essential need to have a mental model based on moderation work with colleagues but this time I think the descriptors help somewhat to keep the levels anchored. So, as a general point about rubrics I think they are never sufficient, and always rely on a mental model based on a moderation process, but all rubrics help to remind an assessor of what is considered important, and some help to anchor the mental model as well.

Turning to assessment for QTS, I think the process is similar. I tweeted a link to the NASBTT rubric the other day, and I think it is a rubric mostly of the first type – like the one you have used in this blog. Ours is more like the second type. For example I think statements such as “planning fails to show awareness of common misconceptions”, “planning shows awareness of common misconceptions”, “teaching shows evidence of addressing common misconceptions as they occur” are at least a little bit helpful in assessing trainee teachers. However, in the end, I am comparing trainee teachers with the most informed mental model I can manage of what good teaching is like.

Echoing Ed’s comment above, I wonder if a rubric is the worst way to assess QTS(P) apart from all the others. Do you have a preferred suggestion? VA is out because even if this was reliable for individual teachers (and it’s not) most trainee teachers don’t produce the necessary data. There might be a role for exams in assessing limited domains of knowledge but the US example isn’t encouraging and I don’t believe the trainee teachers that will do best in such exams are reliably going to be those who perform best in the classroom. CJ is not logistically feasible, is it? Maybe some of the lesson observation protocols from the US have merit, but none have yet been shown to have the necessary reliability.

My view is that perhaps we ought to make a very concerted effort (nationally) to produce the best possible version (or maybe two or three versions) of the second type of rubric. Then we should sort out the stupid “exceeding the TSs” problem and set QTS(P) as meeting the TSs (‘old Grade 3’ if you like, without all the bending of standards that’s happening at the moment), and then base full QTS on some combination of teaching that is better than just scraping through the TS, and/or data that shows decent outcomes, and/or support from colleagues (HoD/HoY/SLT/etc) with evidence of commitment to professional development.
